A water management tragedy is playing out around the Hill Country community of Dripping Springs.

As reviewed in a previous post, the city has predetermined, apparently without any meaningful analysis of options, that it will extend wastewater service to large developments being planned around the city by doubling down on the prevailing 19th century infrastructure model. The plan is to increase capacity at its existing centralized treatment plant and to extend sewer trunk mains to major developments to the east, west and south of the city. The city believes this “requires” them to apply for a permit to discharge effluent from the centralized plant into a branch of Onion Creek, on which are downstream sites of a goodly portion of the recharge of the Edwards Aquifer, and along which reside a goodly number of people who are concerned about the impacts of this discharge on the creek. Recent information indicates that discharges into the creek would also recharge the Trinity Aquifer, a source of their water supply, right about where the wells serving the city are located.

The level of treatment which the Texas Commission on Environmental Quality (TCEQ) appears likely to permit for Dripping Springs will not require reduction of nitrogen. That can lead to algal blooms in the creek, degrading water quality and the visual quality of the riparian environment. Recharge of nitrogen-laden water would also be a problem for drinking water withdrawn from wells. It appears the permit will also not require consideration of contaminants of emerging concern (CECs), such as pharmaceuticals, which are also problematic in drinking water. They may also impact life in the stream. Cases of sex changes in fish have been observed in waters receiving discharges containing CECs.

In response to widespread criticisms of their discharge permit, the city asserts that “most” of the effluent would not be discharged, but would instead be routed to irrigation reuse. Some would be used on city-owned facilities, but since those are quite limited, it appears most would be routed back to the developments generating much of the increased flow to the city’s plant, to be used for irrigation there. However, no reuse lines running to those developments are shown in the city’s Preliminary Engineering Planning Report (PERP), dated July 2013, which a city spokesperson stated is still their “official plan”. Since it’s clear this long-looped, far-flung centralized reuse system would be quite costly, it appears the city has not yet calculated the full cost of their “disposal” focused conventional centralized strategy with reuse just appended on at the end.

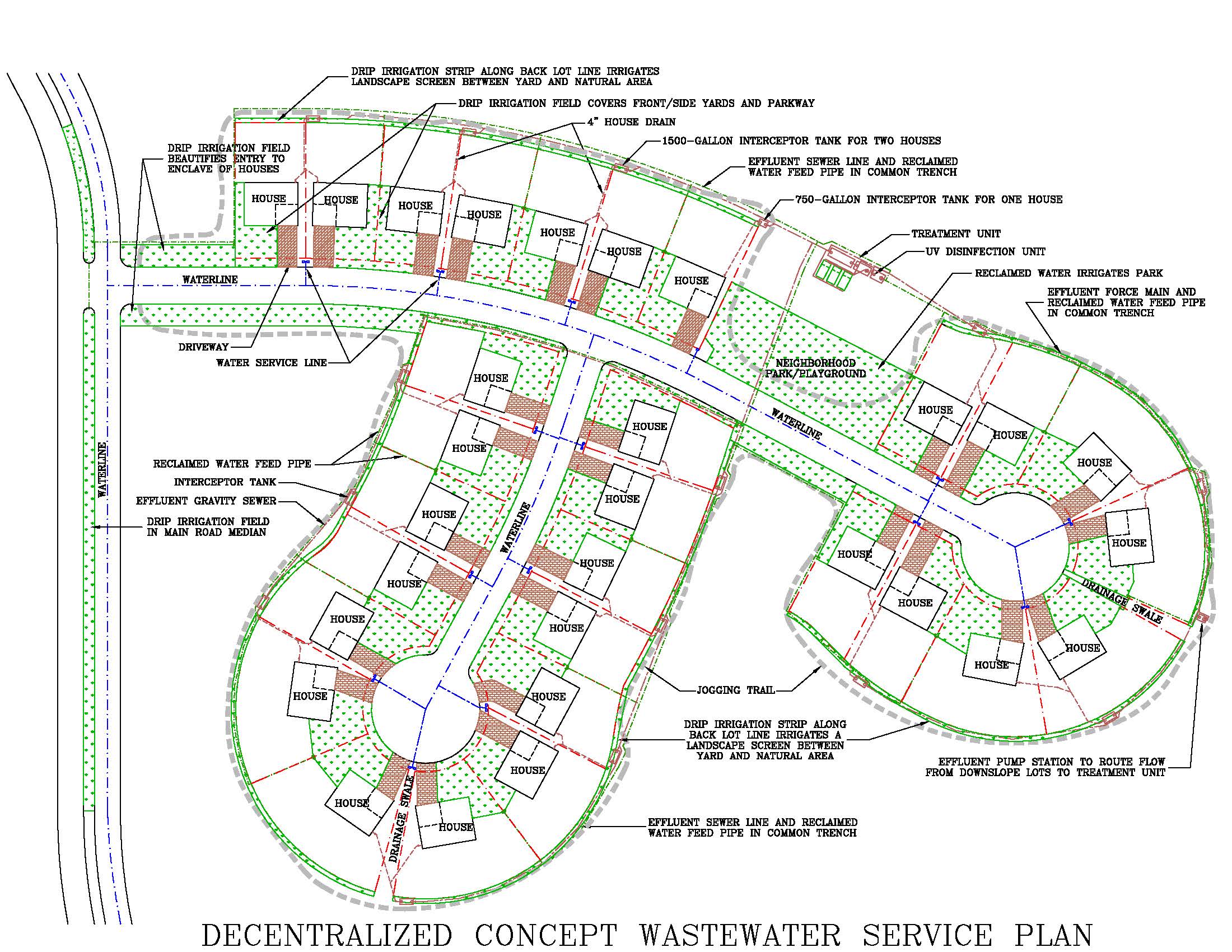

A 21st century option to this very roundabout method was reviewed in “This is How We Do It”. That decentralized concept would obviate the long-looped, high-cost system of pipes and pump stations to first make this water supply “go away” and then to run it back to where it was generated in the first place. Rather, a tight-looped reuse system would be integrated into the development – irrigating the neighborhood where the wastewater is generated – as if reuse were a basic principle of water management, instead of just an afterthought to a disposal-centric system down there at the end of the pipe. Reuse would be cost efficiently maximized, and being designed into the development would not be optional, so would most definitely save on using potable water for irrigation. This is a model which the city and the developers of the large projects around it have so far refused to consider.

Dripping Springs also contends it must centralize all wastewater flows because it aims to implement a direct potable reuse (DPR) scheme, providing additional treatment to bring this wastewater to potable quality and introducing it into the city’s water supply. They assert this is the “ultimate” scheme for reuse of this water. DPR, however, is a rather problematic strategy. The costs would be prodigious, and the city does not own, thus control, the water system that supplies the city; an independent water supply corporation does.

There are also social equity issues with this scheme. Even if it were to integrate its water system into a DPR scheme, that water supply corporation does not, and will not, provide water to some of the outlying development, so in the process of serving that growth, the existing citizens would be expected to drink the reclaimed water produced by those who generate it but won’t have to drink it.

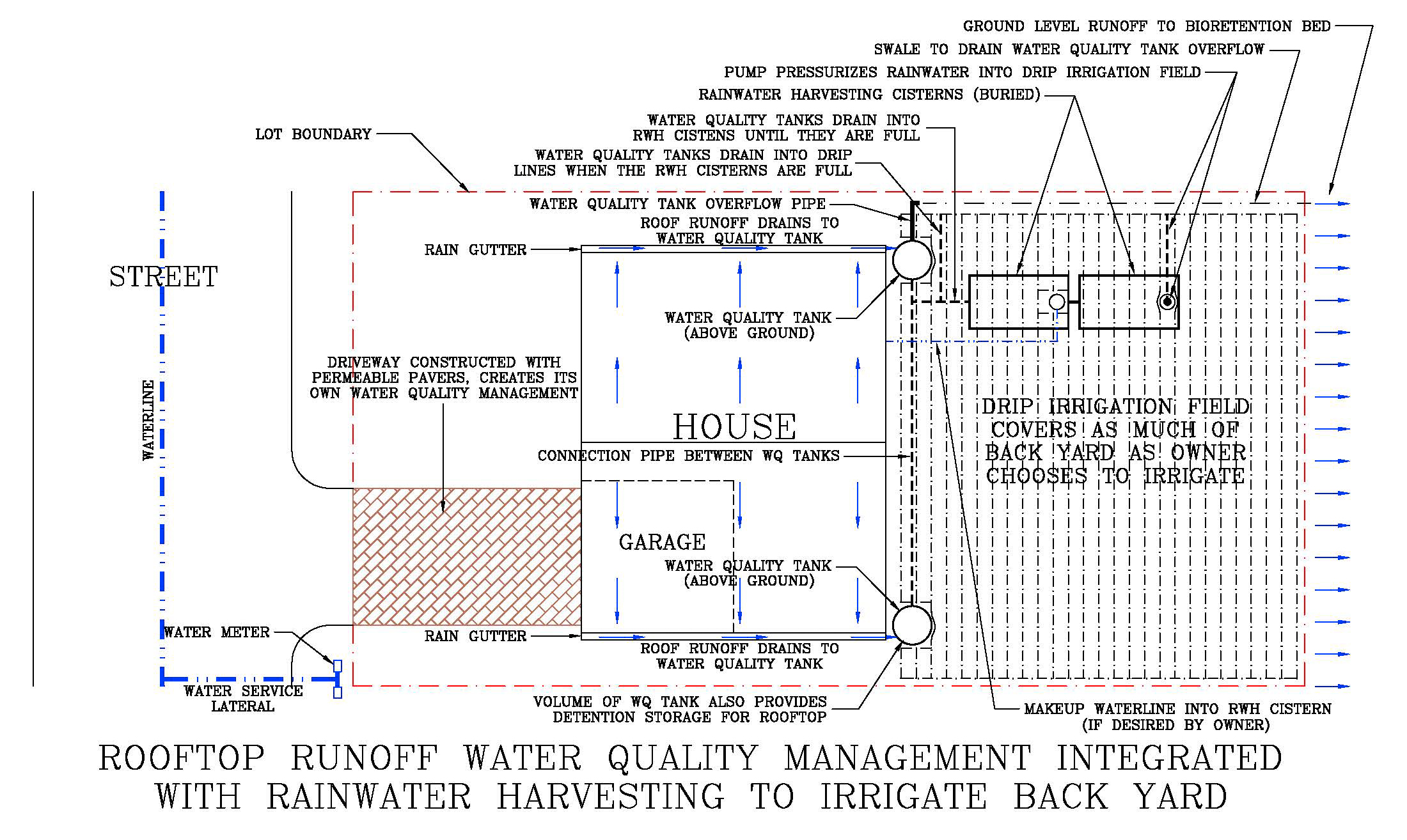

It’s an open question if the water supply situation in and around Dripping Springs is, or will become, so dire that the city would ever seriously consider the extreme costs, and the other complications, of going to DPR. Arguments can be made that increased water supply could be more cost efficiently, and safely, provided locally by building-scale rainwater harvesting, so the actual utility of DPR is questionable. From all indications so far, a DPR system in Dripping Springs is just theoretical. Perhaps not the best argument to ignore anything but a conventional centralized wastewater system, without any regard for the consequences.

So let’s compare the two approaches to expanding the Dripping Springs wastewater system to serve those large outlying developments, to see what those consequences may be. They can be compared on fiscal, societal and environmental grounds.

More Fiscally Reasonable

As reviewed in “This is How We Do It”, the decentralized concept appears to be far more fiscally and economically efficient than the conventional centralized system is projected to be. That analysis was admittedly limited, meant only to be illustrative, and more work is needed to put some meat on that skeleton, but the comparison was stark.

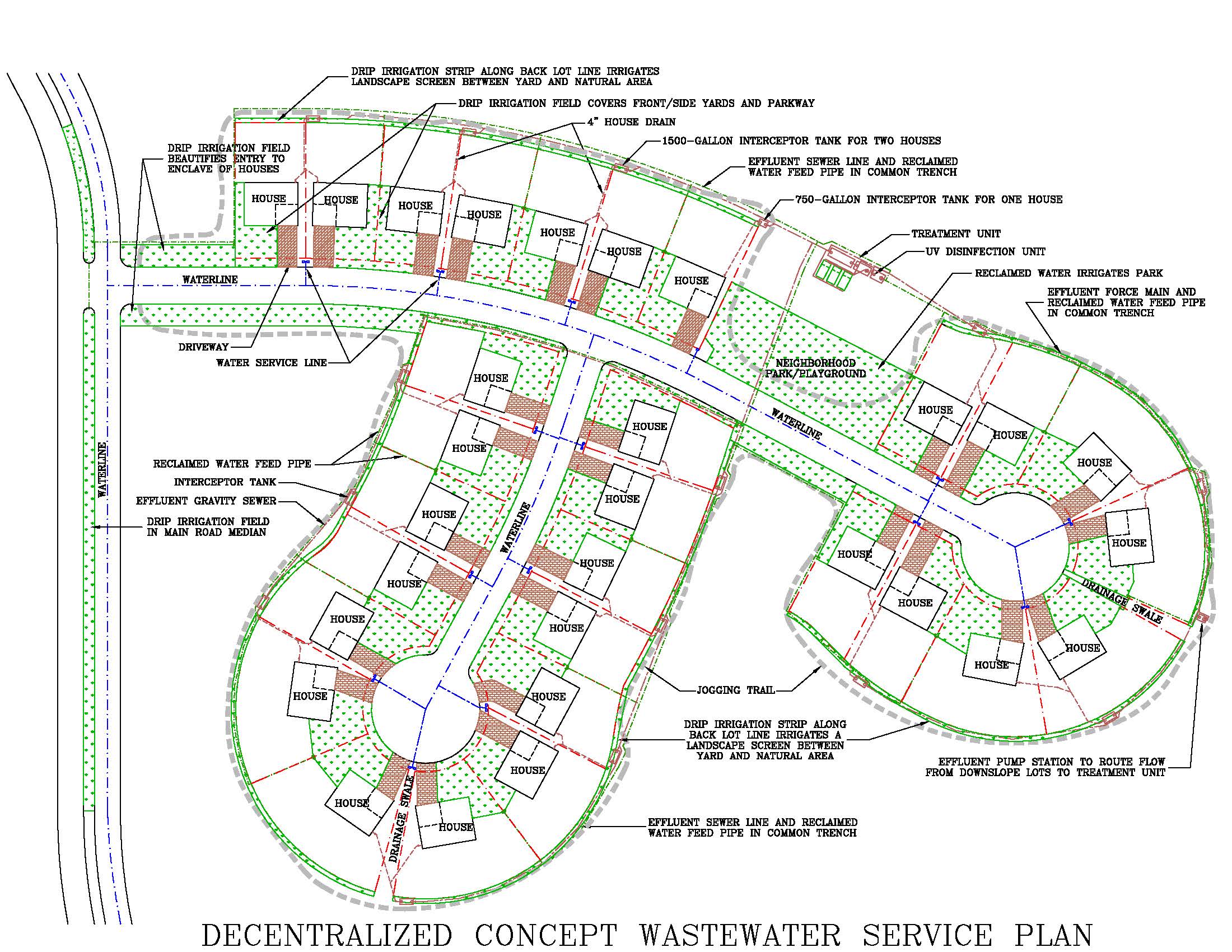

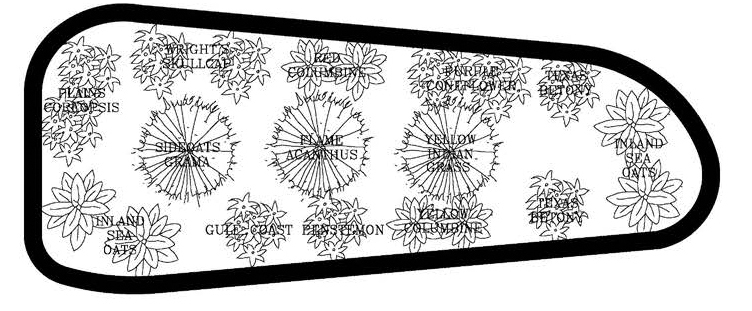

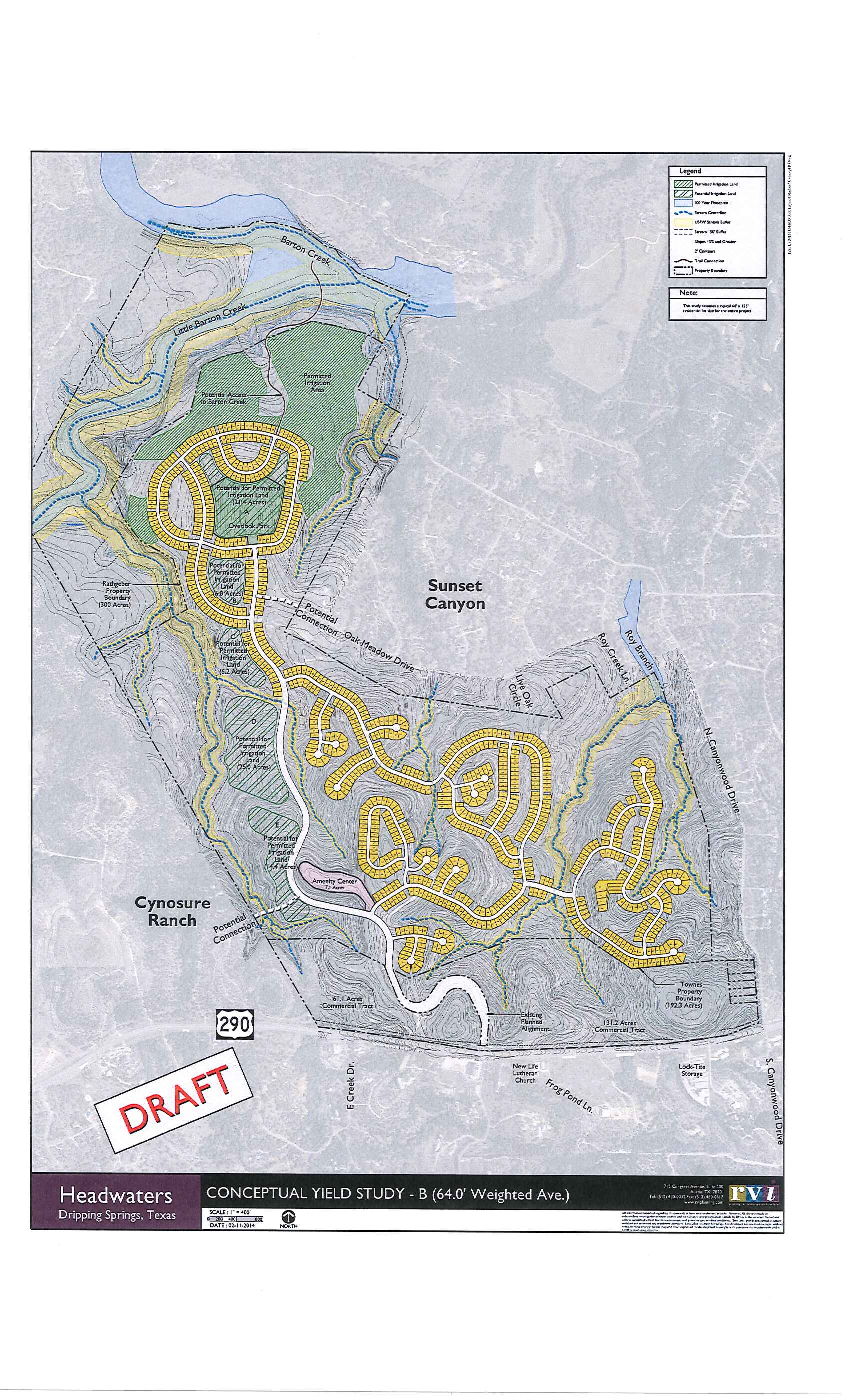

To review, a sketch plan was created – see the figure below – and a cost estimate derived for this decentralized concept strategy in a neighborhood in the Headwaters project, to the east of Dripping Springs. The estimate was $8,000 per house for collection, treatment and redistribution of the reclaimed water throughout the neighborhood, to provide irrigation of front yards, parks/common areas, and parkways. Since these areas would be irrigated in any case, an irrigation system would have to be installed there regardless, so the drip irrigation fields in those areas would not entail much additional cost. The estimated global capital cost of the decentralized concept strategy was therefore $8,000 per house, or about $8 million total for the 1,000 houses planned in Headwaters.


From the city’s PERP, the estimated cost in 2013 of the “east interceptor”, to convey wastewater from Headwaters to the city’s centralized plant, was $7.78 million. Spread over those 1,000 houses, that yields a cost of $7,780 per house. This one line by itself costs almost as much as was estimated for the complete decentralized concept wastewater system, yet all it does is move the stuff around.

To complete the centralized collection system requires installation of all of the local collector lines, manholes, and lift stations within Headwaters, an additional cost likely north of $10 million. Then there would also be a buy-in cost for a share of the treatment capacity at the centralized plant, likely a few million more. So it seems pretty clear that the basic conventional centralized strategy would be more than double the cost of the decentralized concept strategy, which is again a complete system, including reuse. Yet for that much greater cost, they get only collection and treatment, still having to pay for any reuse of this water.
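The arithmetic behind that comparison can be laid out as a rough sketch. The $8,000 per house, the 1,000 houses, and the $7.78 million interceptor are the figures quoted above. The other two inputs are assumptions made only for illustration: the local collection cost is set at the $10 million lower bound of "likely north of $10 million", and the plant buy-in is set at a hypothetical $3 million as a stand-in for "a few million".

```python
# Figures quoted in the text above.
HOUSES = 1_000
DECENTRALIZED_PER_HOUSE = 8_000   # dollars, complete system including reuse
EAST_INTERCEPTOR = 7_780_000      # dollars, 2013 PERP estimate

# ASSUMPTIONS for illustration only (not figures from the PERP):
LOCAL_COLLECTION = 10_000_000     # lower bound of "likely north of $10 million"
PLANT_BUY_IN = 3_000_000          # hypothetical stand-in for "a few million"


def per_house(total_cost, houses=HOUSES):
    """Spread a total capital cost evenly over the planned houses."""
    return total_cost / houses


decentralized_total = DECENTRALIZED_PER_HOUSE * HOUSES
centralized_total = EAST_INTERCEPTOR + LOCAL_COLLECTION + PLANT_BUY_IN
ratio = centralized_total / decentralized_total

print(f"Interceptor alone, per house:  ${per_house(EAST_INTERCEPTOR):,.0f}")
print(f"Decentralized total:           ${decentralized_total:,}")
print(f"Centralized total (assumed):   ${centralized_total:,}")
print(f"Centralized / decentralized:   {ratio:.2f}x")
```

Under these assumed inputs the centralized total comes out at more than twice the decentralized total, and that is before adding any cost for reuse. Changing the two assumed values shifts the ratio, so the result is only as good as those placeholders.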

One wonders, in what other arena would the prospect of getting more function for less than half the cost not be compelling? But in this arena, that prospect does not seem to even be noticed!

Dripping Springs insists that little, if any, of the water would be discharged; instead facilities would be installed to route it to irrigation reuse. That would entail pump stations, transmission mains, storage facilities, distribution lines within the areas where the water would be irrigated, and the irrigation systems. What all this would cost has apparently not been addressed; as noted, the city’s latest PERP is utterly silent on this. To just get the water back to Headwaters, for example, would likely entail a cost similar to the “east interceptor”. Clearly, the cost of enabling reuse under the disposal-centric centralized infrastructure model would be much greater than the cost to integrate reuse into the very fabric of development, as the decentralized concept does.

Then too, the energy demands of the decentralized concept system would be much lower than for the centralized system. The multiple distributed treatment units would use less energy in total than would be required to run the centralized activated sludge treatment plant. Little if any energy would be required to pump wastewater to those distributed units. In contrast, wastewater would run through multiple lift stations to get to the centralized plant. The tight-looped distributed reuse system would require little energy, as the water would only be pumped short distances. In the long-looped centralized strategy, much more energy would be expended to get water from the centralized plant to far-flung points of reuse. These energy savings also impart a fiscal advantage to the decentralized concept.

As it is turning out, however, that analysis of Headwaters is “theoretical” because it appears that Dripping Springs is no longer planning to install the “east interceptor” and run the wastewater from Headwaters to its centralized treatment plant. It appears they will also not build the “west interceptor” to run Scenic Greens, another major development in the city’s hinterlands, to its centralized plant. Instead these developments will be left to implement and independently run stand-alone wastewater systems.

Still, the analysis of that Headwaters neighborhood is indicative of what may be generally expected in any of the outlying developments that Dripping Springs does include in its centralized system. So it remains a general indication of how the decentralized concept would be more fiscally reasonable, likely far more so.

The wastewater systems within Headwaters and Scenic Greens are presently planned to themselves be smaller-scale disposal-centric conventional centralized systems. Effluent will likely be run to “waste areas” within the development rather than to areas that would be irrigated in any case – “land dumping” this water resource. These satellite treatment plants are exactly what Dripping Springs has asserted they are centralizing to avoid, thus the decision to not include Headwaters has societal dimensions to it. Leading us to …

More Societally Responsible

Dripping Springs will face a clear temptation to “cut corners” on the centralized reuse program that’s just appended on to an otherwise “disposal” focused system exactly because it will cost them so much. But under the decentralized concept, reuse will be practically maximized, most cost efficiently, because it’s designed into the development, serving the local and regional water economy well just as a matter of course, no further effort or expense required.

A decentralized concept system would be inherently simpler to plan and finance. Each distributed system would serve a small area, a neighborhood, to be built out in short order. Contrast this with planning large-scale facilities over an area-wide system, with much less definite growth projections.

And because investments are so focused, the costs of planning, designing and implementing the wastewater infrastructure could be readily “assigned” to those who directly benefit from that development – the developer would directly fund the building of those distributed systems. Unlike the conventional centralized system, which is typically financed by loans and bonds, spreading the costs among the whole of the city’s citizenry and/or ratepayer base. So the decentralized concept could be more equitably financed; existing residents would not be compelled to be the “bank” for development.

Then there’s the “time value of money”. With distributed systems, only the infrastructure needed to serve imminent development would be installed, neighborhood by neighborhood, so cost would closely track actual service needs. In the conventional centralized system, on the other hand, facilities that will not be fully utilized for years to come are routinely installed; dollars paid today for something you don’t need for years, foregoing all other investments that money could fund in the meantime. In Headwaters, for example, buildout is expected to take years, but to centralize it, the “east interceptor” and associated lift stations, sized for that ultimate flow, would have to be installed up front of serving the first house.
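A minimal sketch of that point, using a standard present-value calculation. All three inputs are hypothetical and chosen only to illustrate the mechanism: a total cost of $8 million, a 10-year buildout, and a 5% annual discount rate. None of these is a figure from the city's plans, and the sketch deliberately uses the same total for both cases so that only the timing differs.

```python
def present_value(cash_flows, rate):
    """Discount a list of yearly outlays (year 0 first) back to today."""
    return sum(cf / (1 + rate) ** year for year, cf in enumerate(cash_flows))


# HYPOTHETICAL inputs, for illustration only.
TOTAL_COST = 8_000_000    # dollars, same total in both scenarios
BUILDOUT_YEARS = 10       # assumed buildout period
DISCOUNT_RATE = 0.05      # assumed annual discount rate

# Centralized pattern: everything installed before the first house is served.
upfront = [TOTAL_COST] + [0] * (BUILDOUT_YEARS - 1)

# Distributed pattern: equal outlays each year as neighborhoods are built.
phased = [TOTAL_COST / BUILDOUT_YEARS] * BUILDOUT_YEARS

pv_upfront = present_value(upfront, DISCOUNT_RATE)
pv_phased = present_value(phased, DISCOUNT_RATE)

print(f"Present value, all up front: ${pv_upfront:,.0f}")
print(f"Present value, phased:       ${pv_phased:,.0f}")
```

For any positive discount rate the phased outlays have the lower present value, which is the sense in which spending that tracks actual service needs is cheaper than the same dollars committed years in advance.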

And this is all money “at risk”. If, for example, we were to experience another “crash” such as occurred in 2008, the pace of development might slow down, even stop altogether for a time. But once the money is borrowed and the system built, the payments would be due whether development came on line to fund those payments or not. So whoever financed that infrastructure would be “on the hook” to make those payments. If these facilities were publicly financed, it would be all of the ratepayers, and/or taxpayers, who would be called upon to pony up. This could balloon their wastewater rates and/or tax bills. All that would be avoided under a decentralized concept strategy, which assigns that risk to the developer, who would be putting relatively small amounts at risk at a time.

If the management needs of each area were considered independently, there would be no need for a “one size fits all” approach. But the conventional centralized system is a one-trick pony; either an area is sewered and the “waste” water is piped “away”, or – sorry, that’s the one trick – it’s left unmanaged. Under a decentralized concept strategy, the needs of each area can be considered independently. Some areas might be connected to an existing centralized system, some areas may have distributed systems, some areas may use individual on-lot systems, with all of those systems under unified area-wide management. So one management entity could accommodate each development in the most cost efficient manner, with systems best suited to the characteristics of the area and the type of development planned for it.

This would eliminate the “balkanization” of wastewater management Dripping Springs said it’s centralizing to avoid, not wanting a bunch of independent operators running systems around it – or installing unmanaged on-lot systems. On its present course, however, balkanization is just what will happen. Having had to abandon centralizing Headwaters and Scenic Greens, each relatively close in to Dripping Springs proper, highlights that it’s clearly a pipe dream to centralize the whole of Dripping Springs’ far-flung extraterritorial jurisdiction. They will continue to accept a proliferation of independent operators and unmanaged wastewater systems. Under the decentralized concept, they wouldn’t have to; they could manage it all, effectively and cost efficiently.

Independent systems could also be required for any “industrial” wastewater generators that might locate within the service area. Each such generator could be required to tailor its treatment to the characteristics of its wastewater flow. And also to the reuse opportunities inherent in the operation at hand, or that may be offered by co-located activities.

The decentralized concept is inherently growth-neutral. Each distributed system serves only a limited area of known imminent development. The centralized system, however, creates large-scale infrastructure covering an area that would grow over time. Since this infrastructure needs to be installed, and financed, up front of any development over that larger area, that creates an impetus for growth, indeed for higher intensity growth, to pay for those large-scale facilities. The infrastructure funding “tail” is allowed to wag the pace and nature of development “dog”.

The “out of sight, out of mind” nature of the conventional centralized system, taking the water far, far away from the neighborhoods where it’s generated, has at times resulted in wastewater management failing to get adequate funding to do the job well. Many cities, MUDs, etc., have a story or two about that. But with the system right there in the neighborhood, there would be constant vigilance to assure that proper management effort is always applied, that adequate funding to maintain the system is always provided. Of course, some may question if keeping the wastewater in the neighborhood is an undue “hazard”. But as reviewed in “This is How We Do It”, these distributed systems would be rather less likely to create any problems than the wastewater systems now routinely used in hinterlands developments, which do not seem to be causing much alarm, so that objection is rather disingenuous.

A little-noticed feature of the decentralized concept is that the system could readily accommodate any level of water conservation found to be desirable, or necessary, in the future. Employing the effluent sewer concept, the “big chunks” are retained in the interceptor tanks, and only liquid effluent is conveyed to the treatment centers. So cutting the flow, no matter how drastically, would not cause any problems in these collection lines. In conventional sewers, on the other hand, if “too much” water conservation were practiced, the sewers would be “starved” of the liquid flow needed to move solids through the lines. During severe droughts, some utilities have had to haul in water to flush sewer lines because the wastewater generators were “too good” at cutting their water use. Stagnation of sewer flows can cause a buildup of hydrogen sulfide in the sewers. That’s a potentially deadly hazard to sewer workers and can degrade sewer system components. This is another whole field of risk that would be completely avoided under the decentralized concept.

Another societal issue is vulnerability to pollution, an inherent quality of the type of wastewater system being used. This leads us to a consideration of the differences in environmental impacts between the two infrastructure models.

More Environmentally Benign

Scale is a major driver of environmental vulnerability. In the conventional centralized system, large flows run through one pipe or one lift station or one treatment plant, so the consequences of any mishap – like a line break, power outage, flow surge, flood damage – are potentially “large”. With distributed systems, flows at any point in the system remain “small”, thus the potential consequences of any mishap remain “small”. Eliminating all of the large-scale collection lines outside the neighborhoods, the decentralized system has shorter runs of smaller pipes, minimizing vulnerability. Then too, with a distributed system, any mishap that may occur would only affect a small part of the overall system. All the other independent distributed systems would not be impacted at all.

In any case, decentralized concept infrastructure would be much less likely to experience problems to begin with. Effluent sewers are built “tight”, with no manholes, and there are short runs of small pipes, so infiltration/inflow and exfiltration/overflows would be somewhere between minimal and non-existent, while conventional sewers are inherently leak-prone, typically leaking more as they age. Decentralization also minimizes, and perhaps can eliminate, pump stations in the collection system, removing a major source of (sometimes major) bypasses that plague centralized systems.

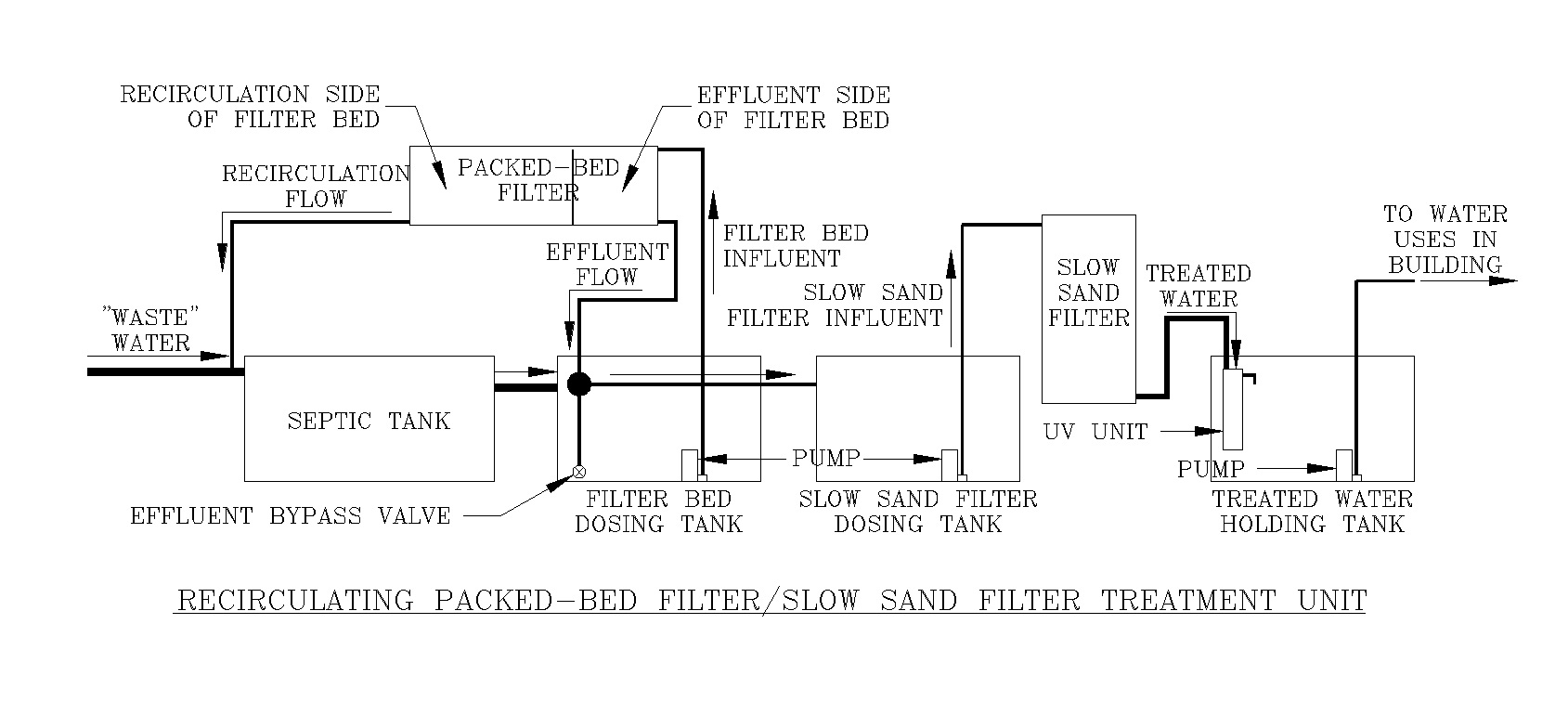

The distributed treatment unit employs a highly stable, very robust technology – the high performance biofiltration concept – which is highly resistant to upsets and, by the very nature of how it’s built and operates, does not allow bypassing of untreated wastewater. The conventional centralized plant, employing the inherently unstable activated sludge technology, is a point of high vulnerability where any sort of mishap, flow surge, etc., could lead to a bypass or poorly treated water running freely on through the treatment plant.

In a conventional centralized system, the larger sewers typically have to run in the lowest topography, the riparian zones. Thus these areas are torn up to install the sewers, and often to repair or upgrade them, creating environmental vulnerability. In the decentralized system, since flows are not highly aggregated, riparian areas can typically be avoided, eliminating this vulnerability.

Also, with the collection lines being small and shallowly buried, far less disruption is entailed when installing the sewer lines, wherever they are located, and reclaimed water distribution lines can typically be laid in the same trench, making their installation non-disruptive. Since the system would be expanded by adding new distributed systems rather than by routing ever more flow to existing treatment centers, there would never be a need to upgrade collection lines, eliminating that on-going disruption.

All these factors impart a far lower vulnerability to environmental degradation with a decentralized concept system. Indeed, centralization is a “vulnerability magnet”, gathering the stuff from far and wide to one point, where again any mishap can cause “large” impacts.

Backward – or Forward?

Carrying the promise of being (far) more fiscally reasonable, more societally responsible, and more environmentally benign than the “disposal” focused 19th century conventional centralized infrastructure model, it is nothing less than a water management tragedy that Dripping Springs, and the developers of the large projects around the city, will not consider the 21st century decentralized concept infrastructure model. Preferring the “comfort” of the familiar, they will extend and perpetuate that 19th century model, incurring the high costs of implementing it and the even higher costs of appending on at the end of the big pipe a far-flung reuse system, along with all the societal and environmental ills it entails. Since this infrastructure has a service life of several decades, this retreat into the past will cement in place an infrastructure model that may hamstring progressive water management in this area for generations to come.

This is a tragedy that local society does not have to endure. It merely requires the boldness and wisdom to move forward, instead of backward. To explore the full range of options, of infrastructure models, that the city and the surrounding developers have at their disposal. Their refusal to do so is a free choice. There are no imperatives “forcing” them to forego such an examination, not fiscally, nor societally, nor environmentally – as just reviewed, all those factors highly favor the decentralized concept. And not regulatorily. TCEQ has confirmed the decentralized concept can be readily permitted.

There is nothing really new here. This is just a re-framing, in the current context, of the forward-looking ideas, ideals, concepts and principles that have been set out for society’s consideration for decades. Perhaps here and now, with the “urgency” of an Onion Creek discharge in the mix, society will choose to act on them.

Indeed the question is, will we continue to fall backward, or move forward?